For years, Silicon Valley has been powered by slop bowls—those generic, overpriced containers of protein, wilted veggies, and mystery sauce. But the industry has evolved. We've traded the literal slop for Slop Code: containers of genericized, unstructured language patterns served up by LLMs.

Building a game with AI isn't about "prompting a masterpiece" in one go; it's about managing the transition from messy, hallucination-filled output to high-performance execution.

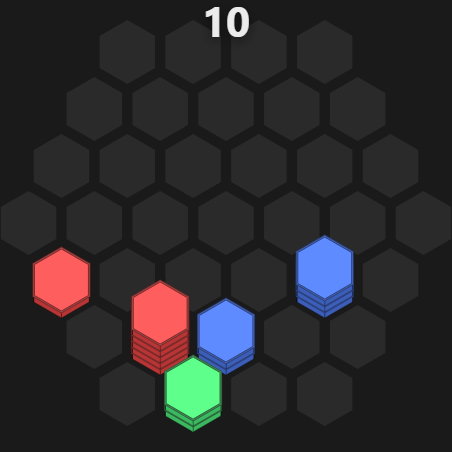

I just released Honey Hex, a hexagonal tile-sorting puzzle. It's a 100% AI-written app, from the first line of logic to the final App Store deployment. Here is the 10-day breakdown of the "Vibe Coding" lifecycle.

Phase 1: The Prototype (2 Hours)

I started in Gemini Canvas. No IDE, no boilerplate, no project structure. I used it as a high-speed scratchpad to map out the core tile-merging logic. If you can't make the math work in a browser window, don't bother opening a compiler.

Focus on the "Core Loop." In this case: dragging stacks, merging colors, and clearing the board.
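The post doesn't show the actual merge rules, so this is only a sketch of what a core loop this small can look like. It assumes a rule set common to the genre: a stack's top run of one color slides onto a stack with the same top color, and a run that reaches a `threshold` clears. It is written in Java here, which is an assumption because the post doesn't name the game's language. `CoreLoop`, `merge`, and `clearIfComplete` are hypothetical names, and hex adjacency is left out.

```java
import java.util.List;

// Hypothetical core-loop rules for a hex stack-sorting puzzle, not the shipped Honey Hex code.
// A stack is a mutable list of color ids; the last element is the top tile.
final class CoreLoop {
    private CoreLoop() {}

    // Length of the run of identical colors at the top of a stack (0 if empty).
    static int topRun(List<Integer> stack) {
        if (stack.isEmpty()) return 0;
        int top = stack.get(stack.size() - 1);
        int run = 0;
        for (int i = stack.size() - 1; i >= 0 && stack.get(i) == top; i--) {
            run++;
        }
        return run;
    }

    // Merge step: if both top colors match, move the source's whole top run onto the target.
    // Returns the number of tiles moved (0 when a stack is empty or the colors differ).
    static int merge(List<Integer> from, List<Integer> to) {
        if (from.isEmpty() || to.isEmpty()) return 0;
        int color = from.get(from.size() - 1);
        if (to.get(to.size() - 1) != color) return 0;
        int run = topRun(from);
        for (int i = 0; i < run; i++) {
            to.add(from.remove(from.size() - 1));
        }
        return run;
    }

    // Clear step: if the top run reaches the threshold, remove it and return the tiles cleared.
    static int clearIfComplete(List<Integer> stack, int threshold) {
        int run = topRun(stack);
        if (run < threshold) return 0;
        for (int i = 0; i < run; i++) {
            stack.remove(stack.size() - 1);
        }
        return run;
    }
}
```

Consider dropping `[3, 2]` next to `[1, 2, 2]`. The two 2s slide over and give `[3, 2, 2, 2]`, and with a threshold of 3 that run clears. This is the whole loop, and it is small enough to check by hand in a browser before any engine is involved.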

Phase 2: Vertical Slop (4 Days)

Once the logic held water, I moved to Cursor for some aggressive "vibe coding." The goal here wasn't clean architecture or SOLID principles; it was producing Vertical Slop: a working vertical slice that proves the game is actually fun, even if everything under the hood is a disaster.

// Representative of the "Slop" phase
public void OnTileDropped(Tile t) {
    // CheckMatch: ~100 lines of code
    // UpdateUI: ~300 lines of code
    // SaveDB: super slow I/O operation
    // Efficient? No. Working? Yes.
    // Atomic methods? What are those?
}

This is the "Whack-a-Mole" era. You fix a UI bug, and the AI accidentally deletes your score manager. But after 4 days, I had a playable build.

The Vibe Coding Pain Points

Working with AI agents isn't all sunshine and auto-complete. It's a constant battle against context drift and tool failure. A few highlights from the trenches:

- God Class anti-pattern: Vibe coding gravitates toward one file that does everything—merge rules, UI, persistence, ads—until every fix risks breaking ten other features.

- Infinite debugger loop: Left to its own devices, the agent would spin up the app, open the HTML page, view it as a screenshot, misread the output, and then either burn through tokens or get lost.

- Emojis: Why is this a thing? My IDE should not have the writing style of a 12-year-old.

Phase 3: The Release & Data-Oriented Architecture (4 Days)

The last four days were about solutions, not another vibe pass. Mobile does not forgive slop—frame time and memory are the scoreboard—so the work was to give humans and agents a system they could change safely.

Core philosophy: separation of concerns. Keep each task's context small, and lean on abstractions that hide detail. The model should read contracts and data shapes, not entire monoliths.
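Here is one way "contracts and data shapes" can look in a single small file. This sketch assumes Java 16+ records, and every type and method name in it is hypothetical rather than taken from Honey Hex.

```java
// Hypothetical contract file: signatures and data shapes only, no implementation.
// An agent reads this instead of the board implementation, the UI, or the save code.

/** Where the player dropped a stack: a stack id and a board cell index. */
record Placement(int stackId, int cellIndex) {}

/** What resolving a placement did, as plain data. */
record Resolution(int tilesMoved, int tilesCleared, boolean boardFull) {}

/** Game rules only: no rendering, persistence, or ads behind this interface. */
interface BoardRules {
    Resolution resolve(Placement placement);
}

/** Persistence lives behind its own contract, so rule changes never touch it. */
interface SaveStore {
    void saveBestScore(int score);
    int loadBestScore();
}
```

Because `BoardRules` has a single method, a test can stub it with a lambda. An agent fixing a rules bug can then work from this file plus one implementation, without loading the UI or save code into its context.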

- Aggressive testing: Fast unit and integration tests are your safety net. Think in layers, and test everything. Honey Hex has many hundreds of tests, so a broken refactor surfaces as a failing test within seconds.

- Empirical coding: Don't let the agent guess. Give it a metric (frame time, allocations, test pass rate) and a lever to pull (pool size, batch size, algorithm flag). Change one thing, measure, repeat. This turns a hallucination machine into a tuning loop.

- Data-oriented architecture: Plain arrays, tight structs, shared layouts. The agent performs best when it's reading simple data shapes—not untangling your domain model. Keep the hot path dumb and the logic portable.

- Write to an interface: Build self-documenting interfaces containing just the method signatures and data shapes. This gave the agent a lightweight map of the entire system without flooding its context window with thousands of lines of code.

- Write CLI tools when needed: For rapid debugging, I wrote a small skill and a Python script that ran the app and fed its console logs directly into the agent's context. Less information, sharper signal. The agent stopped guessing and started fixing.
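The empirical-coding idea (one metric, one lever, change one thing and measure) can be sketched as a small loop. `TuningLoop`, the candidate values, and the synthetic metric are all hypothetical. In a real game the numbers would come from profiler or frame-time data, not from `System.nanoTime()` around a single function.

```java
import java.util.Arrays;
import java.util.function.IntToDoubleFunction;

// Hypothetical one-lever tuning loop: change one parameter, measure, keep the best, repeat.
final class TuningLoop {
    record Result(int bestValue, double bestMetric) {}

    // Middle sample of `runs` wall-clock timings in nanoseconds; less noisy than a single run.
    static long medianNanos(Runnable work, int runs) {
        long[] samples = new long[runs];
        for (int i = 0; i < runs; i++) {
            long start = System.nanoTime();
            work.run();
            samples[i] = System.nanoTime() - start;
        }
        Arrays.sort(samples);
        return samples[runs / 2];
    }

    // Tries each candidate value of a single lever; a lower metric is better.
    static Result tune(int[] candidates, IntToDoubleFunction measure) {
        int best = candidates[0];
        double bestMetric = Double.POSITIVE_INFINITY;
        for (int value : candidates) {
            double metric = measure.applyAsDouble(value);
            System.out.printf("lever=%d metric=%.3f%n", value, metric);  // the log the agent reads
            if (metric < bestMetric) {
                best = value;
                bestMetric = metric;
            }
        }
        return new Result(best, bestMetric);
    }
}
```

To use it, plug in a real measurement, for example `medianNanos` wrapped around a hypothetical `simulateFrame(batchSize)`. The printed `lever=... metric=...` lines then give the agent numbers to compare instead of guesses.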
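For the data-oriented bullet ("plain arrays, tight structs, shared layouts"), here is a minimal sketch. It assumes a struct-of-arrays board where each cell is just an index into flat primitive arrays. `BoardData` and its fields are hypothetical, and building the neighbor table from hex coordinates is left out.

```java
import java.util.Arrays;

// Hypothetical struct-of-arrays board: one flat primitive array per field, indexed by cell id.
final class BoardData {
    static final byte EMPTY = -1;  // top color of an empty cell
    static final int SIDES = 6;    // a hex cell has six neighbors

    final int cellCount;
    final byte[] topColor;   // color id of the top tile, or EMPTY
    final byte[] height;     // tiles stacked in the cell (byte keeps the layout tight; max 127)
    final int[] neighbors;   // SIDES entries per cell, flattened; -1 means off-board

    BoardData(int cellCount) {
        this.cellCount = cellCount;
        this.topColor = new byte[cellCount];
        this.height = new byte[cellCount];
        this.neighbors = new int[cellCount * SIDES];
        Arrays.fill(topColor, EMPTY);
        Arrays.fill(neighbors, -1);
    }

    // Hot path: a tight loop over primitive arrays; no objects, no allocation.
    int matchingNeighbors(int cell) {
        byte color = topColor[cell];
        if (color == EMPTY) return 0;
        int count = 0;
        for (int k = 0; k < SIDES; k++) {
            int nb = neighbors[cell * SIDES + k];
            if (nb >= 0 && topColor[nb] == color) count++;
        }
        return count;
    }

    // Sum of all stack heights on the board.
    int totalTiles() {
        int sum = 0;
        for (int i = 0; i < cellCount; i++) sum += height[i];
        return sum;
    }
}
```

Because the data is flat, an agent asked to speed up `matchingNeighbors` sees the whole data shape in one place. The loop it is tuning touches only primitives.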
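The CLI tool itself was a Python script plus an agent skill, and neither is shown. What follows sketches only the filtering idea behind "less information, sharper signal," in Java for consistency with the other examples. It assumes log lines carry level tags like `[WARN]` and `[ERROR]`; `LogCondenser` and that tag format are hypothetical.

```java
import java.util.ArrayList;
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;

// Hypothetical log condenser: keeps only WARN/ERROR lines, collapses repeats, caps the output.
// Assumes each relevant log line contains a level tag such as "[WARN]" or "[ERROR]".
final class LogCondenser {
    static List<String> condense(List<String> lines, int maxLines) {
        Map<String, Integer> counts = new LinkedHashMap<>();  // keeps first-seen order
        for (String line : lines) {
            if (line.contains("[ERROR]") || line.contains("[WARN]")) {
                counts.merge(line.strip(), 1, Integer::sum);
            }
        }
        List<String> out = new ArrayList<>();
        for (Map.Entry<String, Integer> e : counts.entrySet()) {
            if (out.size() >= maxLines) break;
            int n = e.getValue();
            out.add(n > 1 ? e.getKey() + " (x" + n + ")" : e.getKey());
        }
        return out;
    }
}
```

The app's output would first need to be captured, for instance with `ProcessBuilder` and `redirectErrorStream(true)`. Passing it through `condense` before it reaches the agent then reduces thousands of frame logs to a handful of distinct problems.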

Conclusion

The result is live at honeyhex.xyz. AI is an elite prototyping engine, but it's a terrible architect. Use it to generate the slop, then use your "Senior Dev" brain to turn it into something performant.